The Accountability Imperative: Who Owns AI Risk in Your Organization?

Post Summary

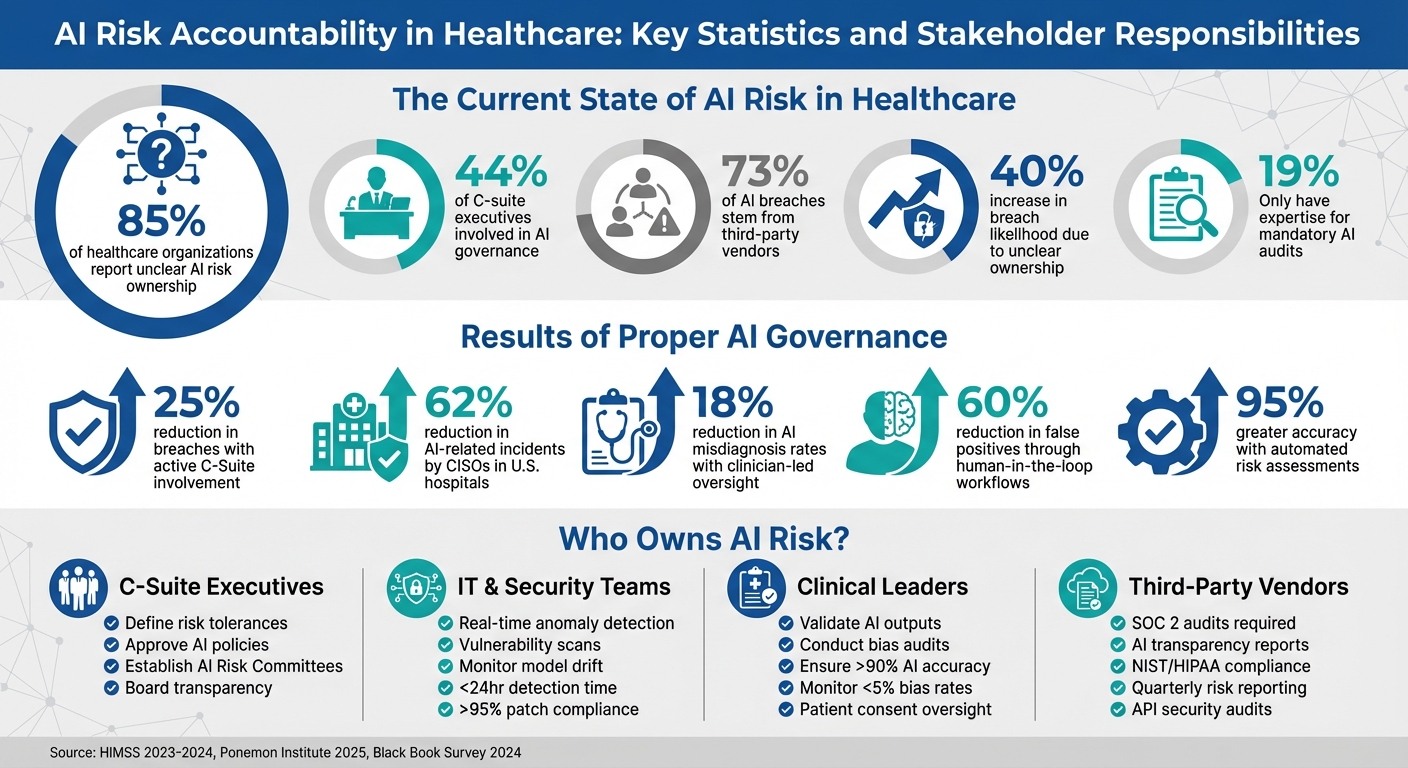

85% of healthcare organizations report unclear AI risk ownership, with accountability currently split equally between CEOs and CISOs at 24% each, and only 44% of C-suite executives involved in AI governance. This ambiguity directly increases the likelihood of breaches by 40%, because teams cannot respond effectively or in a timely manner when responsibility for monitoring, incident investigation, tool approval, and compliance enforcement is not clearly assigned.

The four primary stakeholder groups are C-suite executives including CIOs, CISOs, and Chief AI Officers who define risk tolerances, approve AI policies, and maintain board transparency; IT and security teams who implement real-time anomaly detection, vulnerability scanning, and model drift monitoring; clinical leaders who validate AI outputs against clinical guidelines, conduct bias audits, and maintain human oversight for high-risk decisions; and third-party vendors who introduce supply chain risk through unvetted models, insecure APIs, and improper data handling.

The NIST AI RMF outlines four key functions: Govern, which integrates AI risk into enterprise risk management strategy; Map, which evaluates the operational context of each AI system and assesses impacts on patients and workflows before deployment; Measure, which applies quantitative and qualitative methods to assess the seven characteristics of trustworthy AI including safety, bias, and explainability; and Manage, which allocates resources for risk mitigation and maintains response and recovery plans for AI failures.

The EU AI Act, which entered into force on August 1, 2024, classifies AI systems into four risk categories. Healthcare AI used for diagnostics, treatment, or clinical decision support typically falls under the high-risk category, requiring continuous risk management, conformity assessments, detailed technical documentation, and registration in a designated EU database before market release. Non-compliance can result in fines up to 35 million euros or 7% of global annual turnover, whichever is greater.

Organizations must require SOC 2 audits, AI transparency reports, and adherence to NIST standards from all AI vendors. Contracts should mandate quarterly risk score reporting, API audit rights, and 24-hour breach notification requirements. The Ponemon Institute's 2025 report found that 73% of AI breaches in healthcare are linked to third-party vendors, and a 2024 Black Book survey found 40% of healthcare data breaches involved vendor AI tools, establishing vendor accountability as the highest-leverage area for breach risk reduction.

Censinet AI uses machine learning to analyze vendor questionnaires, security evidence, and compliance documents in real time, validating data against NIST and HIPAA standards and reducing assessment timelines from weeks to hours with 95% greater accuracy. A major US health system using Censinet AI reduced per-vendor assessment time from 40 hours to 2 hours across more than 200 vendors, uncovered 15% more high-risk AI integrations related to patient data privacy, and estimated $4.5 million in averted potential breach costs. Organizations using the platform have reported 25% reduction in third-party risk exposure within six months.

AI is transforming healthcare, but with this transformation come risks - especially when systems fail. The stakes are high: errors in AI-controlled medical devices or cyberattacks on healthcare systems can cost lives. A key question arises: who is responsible for managing these risks?

Key Takeaways:

Solutions:

Clear accountability, structured governance, and proactive risk management are essential for safely integrating AI into healthcare.

AI Risk Accountability in Healthcare: Key Statistics and Stakeholder Responsibilities



Defining AI Risk Ownership: Core Principles

Laying down clear principles is the first step in managing AI responsibly. These principles provide a unified framework, ensuring that everyone - from top executives to frontline workers - understands their role in maintaining accountability. They form the backbone of the responsibilities outlined below.

Accountability and Clear Roles

Unclear responsibilities often lead to gaps in oversight. For instance, only 44% of C-suite executives are involved in shaping AI-related processes, and just 33% contribute to governance aimed at reducing AI risks. Even ownership of AI accountability is split, with 24% of organizations assigning it to the CEO and another 24% to the CISO [3].

To avoid such ambiguity, organizations must clearly outline who is responsible for key tasks like monitoring AI performance, investigating incidents, approving new tools, and ensuring compliance. With well-defined roles, teams can respond more effectively and in a timely manner.
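One way to make these assignments checkable is a small ownership register. The sketch below is illustrative only: the task names mirror the four tasks listed above, while the role titles and the `find_unowned` helper are hypothetical and not drawn from any cited framework.

```python
# Hypothetical ownership register: every key task should map to one accountable role.
REQUIRED_TASKS = [
    "monitor_ai_performance",
    "investigate_incidents",
    "approve_new_tools",
    "ensure_compliance",
]


def find_unowned(register: dict[str, str]) -> list[str]:
    """Return required tasks that are missing or have a blank owner."""
    return [task for task in REQUIRED_TASKS if not register.get(task, "").strip()]


# Example register with two gaps (role titles are assumptions, not recommendations).
register = {
    "monitor_ai_performance": "IT Security Lead",
    "investigate_incidents": "CISO",
    "approve_new_tools": "",  # listed, but nobody is named
    # "ensure_compliance" is not listed at all
}

gaps = find_unowned(register)
print(gaps)
```

Running a check like this whenever a new AI tool is onboarded would surface unassigned tasks before an incident does.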

Transparency and Governance

Establishing accountability is only part of the equation - strong governance is equally important. Many AI systems operate as "black boxes", relying on statistical patterns rather than transparent rules, which makes it hard to explain their decisions [1]. As Claude Mandy, Chief Evangelist for Data Security at Symmetry Systems, explains:

"The 'black box' nature of many AI systems clashes with our obligation to explain decisions that affect people's lives."

To address this, organizations should implement robust governance frameworks. These might include forming cross-functional AI Risk Committees that bring together experts from security, legal, clinical, and ethics teams. Preparing for mandatory AI audits is also crucial, especially since only 19% of organizations currently have the expertise to handle them [3]. Transparency in the AI supply chain is another key factor - healthcare providers, for example, need confidence in everything from the training data used to the platforms where AI tools are deployed.

Safety and Bias Prevention in AI Systems

Ensuring the safety of AI systems and minimizing bias is essential. Errors in AI often arise from system malfunctions or complex interactions between humans and technology [1]. Compromised training data, or "data poisoning", is another significant threat [4]. Additionally, AI systems sometimes generate inaccurate or misleading outputs - so-called "hallucinations" - that can appear highly credible [1].

To combat these risks, organizations must conduct rigorous, ongoing bias testing. This involves analyzing AI outcomes across demographic groups - such as race, socioeconomic status, and geography - to prevent the technology from deepening existing disparities [2]. Synthetic data can be a useful tool for identifying potential biases, such as racial or regional imbalances, before deploying AI systems. The goal is to move from reacting to incidents after they occur to proactively managing risks. As Gianmarco Di Palma and colleagues highlight:

"Clinical Risk management is a solid basis for the integration of AI and blockchain in healthcare... ensuring regulatory compliance on the one hand and supporting the implementation of more robust controls within complex healthcare contexts."
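The demographic bias testing described in this section can be sketched with one simple metric: the gap in positive-prediction rates between groups, often called the demographic parity difference. This is only one of many fairness measures and the article does not prescribe a specific one. The group labels and records below are made up for illustration.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """records: iterable of (group, flagged) pairs -> {group: share flagged}."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, flagged in records:
        totals[group] += 1
        positives[group] += int(flagged)
    return {group: positives[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Largest gap in positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Made-up records: (demographic group, whether the model flagged the patient).
records = [
    ("group_a", True), ("group_a", False), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = positive_rate_by_group(records)
gap = max_disparity(rates)
print(rates, gap)
```

A large gap does not prove bias on its own, but it is a reasonable trigger for a closer clinical review of the affected groups.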

Assigning AI Risk Ownership Across Your Organization

To effectively manage AI risks, organizations need to assign clear responsibilities at every level. This not only ensures accountability but also helps build a strong foundation of AI governance, particularly in healthcare cybersecurity. A striking statistic highlights the urgency: 85% of healthcare organizations report unclear AI risk ownership, which increases the likelihood of breaches by 40% [10]. The solution? Assigning specific roles and tasks to key stakeholders to ensure no critical areas are overlooked.

C-Suite: Executive Oversight and Governance

The responsibility for AI risk management starts at the top. CIOs, CISOs, and Chief AI Officers play a central role by defining risk tolerances, approving AI policies, and maintaining transparency with the board. According to a 2023 HIMSS report, healthcare organizations with active C-Suite involvement in AI oversight saw a 25% reduction in breaches, thanks to proactive governance frameworks [5][6]. These leaders often establish AI Risk Committees that meet quarterly, using KPI dashboards to monitor governance progress and breach-related costs. Notably, CISOs in U.S. hospitals have reduced AI-related incidents by 62%, as reported in a 2024 HIMSS survey [11]. Once the governance framework is set, IT and security teams take over to implement technical defenses.

IT and Security Teams: Technical Risk Management

IT and security teams are the backbone of AI risk mitigation, focusing on technical safeguards. Their work includes real-time anomaly detection integrated with SIEM systems, regular vulnerability scans, and monitoring for model drift or adversarial attacks. For instance, a Mayo Clinic case study revealed how automated monitoring helped detect a 15% increase in anomalous AI predictions. By isolating affected systems, they successfully prevented potential data breaches [7]. The adoption of zero-trust models for AI systems has surged by 50% since 2023, reflecting a growing emphasis on rigorous security measures. These teams aim to keep the mean time to detect vulnerabilities under 24 hours and maintain patch compliance rates above 95% [6]. Meanwhile, clinical leaders focus on ensuring AI aligns with patient care standards.
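The two targets mentioned above, mean time to detect under 24 hours and patch compliance above 95%, are easy to compute once detection timestamps and patch counts are recorded. The following is a minimal sketch using invented sample data. The function names are hypothetical and not tied to any SIEM product.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(events):
    """events: list of (introduced_at, detected_at) datetimes -> mean timedelta."""
    deltas = [detected - introduced for introduced, detected in events]
    return sum(deltas, timedelta()) / len(deltas)


def patch_compliance(patched: int, total: int) -> float:
    """Share of systems that have the required patches applied."""
    return patched / total


# Invented sample data for illustration.
events = [
    (datetime(2025, 1, 1, 8, 0), datetime(2025, 1, 1, 20, 0)),  # detected after 12 h
    (datetime(2025, 1, 2, 8, 0), datetime(2025, 1, 3, 14, 0)),  # detected after 30 h
]

mttd = mean_time_to_detect(events)
meets_mttd_target = mttd < timedelta(hours=24)
meets_patch_target = patch_compliance(192, 200) > 0.95
print(mttd, meets_mttd_target, meets_patch_target)
```

Note that a mean can hide outliers: in the sample data one detection took 30 hours even though the average stays under the 24-hour target.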

Clinical Leaders: Ethical and Operational Oversight

Clinical leaders are tasked with ensuring that AI enhances patient care without sacrificing ethical standards. Their responsibilities include validating AI outputs against clinical guidelines, conducting bias audits for diagnostic tools, and integrating AI into workflows while maintaining care quality. A NEJM Catalyst survey found that clinician-led oversight reduced AI misdiagnosis rates by 18%, thanks to patient-focused validation processes [5][6]. These leaders track AI accuracy, aiming for benchmarks above 90%, and monitor bias rates, keeping them below 5%. They also oversee informed consent for AI-driven decisions and ensure human oversight for high-risk AI models. However, effective accountability also requires managing risks introduced by external vendors.

Third-Party Vendors: Supply Chain Risks

Vendors often pose significant risks through unvetted AI models, insecure APIs, or improper data handling. According to the Ponemon Institute's 2025 report, 73% of AI breaches in healthcare are linked to third-party vendors [12]. To address this, organizations must require SOC 2 audits, AI transparency reports, and adherence to NIST standards from all vendors. A 2024 Black Book survey revealed that 40% of healthcare data breaches involved vendor AI tools [6]. For example, Cleveland Clinic achieved zero AI-related incidents in 2024 by holding vendors accountable for API audits and requiring quarterly risk score reporting [7]. The demand for vendor risk management platforms has grown 35% annually, driven by regulatory requirements like HHS AI guidelines and the need for continuous oversight.

Building Governance Frameworks for AI Accountability

Once you've assigned clear responsibilities for managing AI risks within your organization, the next step is to formalize those responsibilities through structured governance frameworks. These frameworks prevent accountability from becoming scattered and ensure that AI risks are managed in an organized, consistent manner. Industry-standard frameworks provide healthcare organizations with proven methods to address AI risks while keeping up with evolving regulations. Essentially, such frameworks integrate previously defined roles into formal risk management processes.

Using the NIST AI Risk Management Framework

The NIST AI Risk Management Framework (AI RMF) outlines four key functions: Govern, Map, Measure, and Manage. Together, these functions help establish executive oversight, frame AI systems within their operational context, assess their trustworthiness, and allocate resources for mitigating risks [14][15].

By establishing clear guidelines, this framework supports responsible innovation while keeping risks in check. It also emphasizes collaboration across departments - clinical, IT, legal, marketing, and operations - to ensure accountability [13]. When selecting AI vendors, prioritize companies that are transparent about how their systems work. Avoid solutions that are opaque, even if they seem advanced [13]. The framework’s credibility is underscored by contributions from over 240 organizations spanning industry, academia, and government [14].

Applying Tiered Risk Approaches from the EU AI Act

While the NIST framework provides a comprehensive risk management structure, the EU AI Act offers a tiered regulatory model. In force since August 1, 2024, the EU AI Act classifies AI systems into four risk categories: Unacceptable, High, Limited, or Minimal/No Risk [16]. Healthcare AI used for diagnostics, treatment, or decision-support typically falls under the High-Risk category due to its potential impact on patient safety. For these systems, organizations must:

- Maintain continuous risk management

- Complete conformity assessments

- Keep detailed technical documentation

- Register the system in a designated EU database before market release

The EU AI Act has a global ripple effect, much like GDPR did for data privacy. Non-compliance with the Act’s provisions, such as the prohibition of certain AI systems, can result in steep fines - up to €35 million or 7% of global annual turnover, whichever is greater [16]. To ensure compliance, healthcare organizations should conduct a "Go/No-Go" analysis for each AI system, documenting its purpose and operational context. For lower-risk applications, like patient-facing chatbots, transparency obligations require informing users when they are interacting with AI.
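The "whichever is greater" rule for the top penalty tier is simple arithmetic, and a short sketch makes the crossover point visible. The function name below is hypothetical. It models only the ceiling quoted above, not how a regulator would set an actual fine.

```python
def max_fine_eur(global_annual_turnover_eur: int) -> float:
    """Ceiling quoted above: EUR 35 million or 7% of turnover, whichever is greater."""
    return max(35_000_000, global_annual_turnover_eur * 7 / 100)


# Below EUR 500 million in turnover, the fixed EUR 35 million figure is the larger one.
small_org_cap = max_fine_eur(200_000_000)
# Above EUR 500 million, the 7% figure takes over.
large_org_cap = max_fine_eur(1_000_000_000)
print(small_org_cap, large_org_cap)
```

The two figures meet at EUR 500 million in global annual turnover, since 7% of 500 million is 35 million.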

Creating Human Oversight and Incident Response Plans

Human oversight is critical when using high-risk AI in healthcare. Clinicians must have clear protocols for overriding AI recommendations to reduce bias and prioritize patient safety. Establishing "human-in-the-loop" processes ensures that decisions remain under human control. Additionally, incident response plans should address AI-related failures by including measures like "safe failure modes" and mechanisms to deactivate or override the system immediately [14]. Continuous monitoring and formal incident reporting are also essential to detect issues like model drift or performance degradation during post-market use.
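A minimal sketch of how a clinician override and a "safe failure mode" might wrap an AI recommendation is shown below. Everything here is an assumption made for illustration: the `decide` function, the `Decision` type, and the `refer_to_clinician` fallback value are hypothetical and do not describe any specific product.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    value: str
    source: str  # "ai", "clinician_override", or "safe_fallback"


def decide(
    model: Callable[[dict], str],
    case: dict,
    *,
    enabled: bool = True,
    clinician_override: Optional[str] = None,
) -> Decision:
    """Return a decision, always giving a clinician's override priority."""
    if clinician_override is not None:
        return Decision(clinician_override, "clinician_override")
    if not enabled:
        # Kill switch: the model has been deactivated, so hand the case to a human.
        return Decision("refer_to_clinician", "safe_fallback")
    try:
        return Decision(model(case), "ai")
    except Exception:
        # Safe failure mode: a model error never yields an unreviewed recommendation.
        return Decision("refer_to_clinician", "safe_fallback")


def failing_model(case: dict) -> str:
    raise RuntimeError("model unavailable")


print(decide(lambda case: "low_risk", {}))
print(decide(lambda case: "low_risk", {}, clinician_override="high_risk"))
print(decide(failing_model, {}))
```

Recording the `source` field with each decision also supports the audit trail requirement, because it shows whether the AI, a clinician, or the fallback produced the outcome.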

Building diverse oversight teams is equally important. Teams that include a mix of backgrounds and expertise are better positioned to identify unintended consequences and biases in AI systems [14]. All oversight processes should be thoroughly documented, including criteria for decisions, escalation procedures, and audit trails.

"Risk management frameworks are in the category of '-ish,' which means that organizations can be 'compliant-ish,' or somewhat compliant. That is worlds better than complete noncompliance."

Scaling AI Risk Management with Censinet RiskOps

Managing AI risks across hundreds of vendors and internal systems can quickly become overwhelming, even for healthcare organizations with robust staffing. Censinet RiskOps™ tackles this issue by blending AI-powered automation with human oversight, allowing healthcare providers to scale their risk management efforts without compromising on precision or accountability. Here’s how automation, expert input, and real-time dashboards work together to streamline risk management.

Automated Third-Party Risk Assessments with Censinet AI™

Censinet AI™ simplifies the complex process of vendor risk assessments by employing machine learning to analyze questionnaires, security evidence, and compliance documents in real time. By validating data against standards like NIST and HIPAA, it slashes assessment timelines from weeks to mere hours, delivering 95% greater accuracy [18]. For instance, a major U.S. health system used Censinet AI™ to automate risk assessments for over 200 vendors, reducing the time needed per vendor from 40 hours to just 2 hours. Additionally, the platform uncovered 15% more high-risk AI integrations related to patient data privacy, enabling proactive measures that averted potential breaches, saving an estimated $4.5 million [5].

Beyond speed, the platform generates risk scores and comprehensive reports on AI vulnerabilities, such as model bias and data leakage. Healthcare organizations using Censinet AI™ have reported a 25% reduction in overall third-party risk exposure within just six months, along with measurable ROI of $1.2 million in annual savings for large hospital networks [6].

Human-in-the-Loop Automation for Accountability

While automation accelerates processes, Censinet AI™ incorporates human-in-the-loop workflows to ensure critical decisions are guided by expert judgment. High-risk issues - like ethical biases in diagnostic AI models - are flagged by the system but require approval from designated experts for escalations, remediation, or risk acceptance [7]. This hybrid approach ensures complete traceability with audit trails and reduces false positives by 60% through iterative learning.

Organizations can customize role-based workflows, where IT teams handle technical risks and clinical leaders evaluate ethical concerns. AI confidence thresholds determine when human intervention is necessary, maintaining a balance between speed and accuracy. Deployment is quick, typically taking 4–6 weeks, and ongoing monitoring helps enforce a zero-tolerance policy for biased AI outputs [8].
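To illustrate the general idea of confidence thresholds combined with role-based routing, here is a small sketch. It is not Censinet's implementation or API. The `route_finding` function, the reviewer names, and the 0.85 threshold are all assumptions chosen for the example.

```python
# Hypothetical mapping from finding category to the reviewing team.
REVIEWERS = {
    "technical": "it_security",
    "ethical": "clinical_leadership",
}


def route_finding(
    category: str,
    confidence: float,
    high_risk: bool,
    threshold: float = 0.85,  # assumed value; a real threshold would be tuned
) -> str:
    """Send a finding to a human reviewer unless it is low-risk and high-confidence."""
    needs_human = high_risk or confidence < threshold
    if not needs_human:
        return "auto_process"
    return REVIEWERS[category]


print(route_finding("technical", 0.95, high_risk=False))
print(route_finding("technical", 0.60, high_risk=False))
print(route_finding("ethical", 0.99, high_risk=True))
```

In this sketch, high-risk findings always go to a person regardless of confidence, which matches the principle that automation should not accept risk on its own.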

AI Risk Dashboards for Real-Time Governance

Censinet RiskOps™ also provides centralized, interactive dashboards that give healthcare organizations real-time visibility into AI risks. These dashboards display critical data like risk heatmaps, vendor scores, and trend alerts in formats familiar to U.S. healthcare teams - complete with USD cost projections and MM/DD/YYYY date formats [19][9]. Shared views and comment threads allow for cross-team collaboration, enabling swift decision-making.

For example, if a dashboard shows a 15% increase in third-party AI vulnerabilities, it can trigger immediate alerts to the C-Suite, leading to decisive actions like vendor terminations within 24 hours [9]. The platform also automates tiered risk evaluations based on established frameworks, making it possible to manage third-party vendor risk for over 1,000 vendors without the need for custom development.
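A trend alert like the 15% example above boils down to a percent-change check between two reporting periods. The sketch below shows one way to write it. The `should_alert` function is hypothetical and the counts are invented. The comparison is cross-multiplied to avoid floating-point rounding at the exact threshold.

```python
def should_alert(previous: int, current: int, threshold_pct: float = 15.0) -> bool:
    """True when the count rose by at least threshold_pct percent since last period."""
    if previous <= 0:
        # No baseline to compare against: treat any new vulnerability as alert-worthy.
        return current > 0
    # Equivalent to (current - previous) / previous * 100 >= threshold_pct.
    return (current - previous) * 100 >= threshold_pct * previous


# Invented counts of open third-party AI vulnerabilities in two periods.
print(should_alert(40, 46))  # a rise of exactly 15%
print(should_alert(40, 45))  # a rise of 12.5%
```

What happens after the alert, such as escalation to the C-Suite, remains a governance decision that the ownership model has to define.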

Conclusion: Building a Culture of AI Accountability

AI accountability in healthcare isn't just a box to check - it’s a critical necessity. It demands clear ownership, structured governance, and the right tools tailored to the complexities of the industry. As Matt Christensen, Sr. Director GRC at Intermountain Health, aptly states:

"Healthcare is the most complex industry... You can't just take a tool and apply it to healthcare if it wasn't built specifically for healthcare."

To navigate this complexity, organizations need enterprise-wide visibility, frameworks like the NIST AI Risk Management Framework and the HSCC SMART toolkit, and automated oversight solutions like Censinet RiskOps™. These elements don’t just reduce compliance headaches - they turn AI challenges into opportunities to drive strategic growth.

Achieving this requires more than just tools and frameworks. It demands precise role definition and seamless collaboration across all levels of the organization - executives, technical teams, and external partners. When these roles are supported by human-in-the-loop automation, they create a robust accountability structure that ensures resilience and adaptability.

At its core, AI accountability relies on human expertise. While technology can streamline processes and provide data-driven insights, only humans can interpret the nuances of that data, make ethical decisions, and ensure AI is used responsibly in sensitive healthcare environments. By defining roles clearly, adopting proven frameworks, and using tools designed specifically for healthcare, organizations can confidently embrace AI while maintaining trust, adhering to regulations, and upholding ethical standards.

FAQs

Who should ultimately own AI risk in a hospital?

AI-related risks in hospitals should be the responsibility of the organization's leadership, including executive teams and governance committees. These leaders must ensure proper accountability and oversight while implementing risk management frameworks. This includes tackling critical concerns such as data security, compliance, and ethical considerations surrounding AI use.

What does 'high-risk AI' mean for healthcare teams?

In healthcare, the term high-risk AI applies to technologies that could endanger patient safety, compromise data security, or fail to meet regulatory requirements. These risks can manifest in various ways, such as incorrect diagnoses, harmful treatment recommendations, breaches of sensitive patient data, or biased algorithms leading to unequal outcomes.

Addressing these challenges demands robust oversight. This includes implementing clear policies around data privacy, ensuring algorithms are transparent, and maintaining thorough monitoring processes. Frameworks like those from NIST can play a crucial role in keeping AI systems safe, ethical, and in line with regulations like HIPAA and FDA standards.

What should we require from AI vendors to reduce breach risk?

To lower the risk of breaches, it's essential to ensure AI vendors adopt stringent data security practices. They should also comply with relevant regulations, such as HIPAA, and offer clear assurances regarding their system's performance and efforts to reduce bias. Additionally, having solid incident response plans and continuous monitoring in place strengthens accountability and reduces potential weak points.

Related Blog Posts

- The Healthcare AI Paradox: Better Outcomes, New Risks

- Board-Level AI: How C-Suite Leaders Can Master AI Governance

- The AI Risk Professional: New Skills for a New Era of Risk Management

- Boardroom to Bedside: Making AI Governance Everyone's Responsibility


Key Points:

Why does unclear AI risk ownership increase breach likelihood and what does current ownership distribution look like?

- 40% breach likelihood increase – The correlation between unclear AI risk ownership and breach likelihood is quantified at a 40% increase, establishing organizational ambiguity as a direct and measurable patient safety and security risk rather than an abstract governance concern.

- Fragmented C-suite ownership – AI accountability is currently split with 24% of organizations assigning it to the CEO and 24% to the CISO, with no dominant organizational model, while only 44% of C-suite executives are involved in shaping AI-related processes and only 33% contribute to governance aimed at reducing AI risks.

- Audit readiness gap – Only 19% of healthcare organizations currently have the expertise required to handle mandatory AI audits, establishing a capability gap that unclear ownership compounds by leaving no clear accountable party for audit preparation and response.

- Black box accountability conflict – The opaque nature of many AI systems that rely on statistical patterns rather than transparent rules conflicts directly with the obligation to explain AI-driven decisions affecting patient lives, making accountability assignment a governance necessity rather than an optional organizational design choice.

- Supply chain opacity – Transparency in the AI supply chain including confidence in training data origins and deployment platforms requires assigned accountability at the vendor management level that many organizations currently lack, leaving supply chain risk unmanaged by default.

- Cross-functional committee gap – Forming cross-functional AI Risk Committees that bring together experts from security, legal, clinical, and ethics teams is identified as the structural accountability solution, but only organizations that have explicitly assigned ownership have the organizational mandate to form and sustain such committees.

What are the specific responsibilities of each of the four AI risk stakeholder groups?

- C-suite governance responsibilities – CIOs, CISOs, and Chief AI Officers define risk tolerances, approve AI policies, and maintain transparency with boards. Healthcare organizations with active C-suite involvement in AI oversight achieved a 25% reduction in breaches through proactive governance frameworks, and CISOs in US hospitals have reduced AI-related incidents by 62% according to a 2024 HIMSS survey.

- IT and security technical responsibilities – IT and security teams implement real-time anomaly detection integrated with SIEM systems, conduct regular vulnerability scans, and monitor for model drift and adversarial attacks. The adoption of zero-trust models for AI systems has surged 50% since 2023. Target metrics include mean time to detect vulnerabilities under 24 hours and patch compliance rates above 95%.

- Clinical leader oversight responsibilities – Clinical leaders validate AI outputs against clinical guidelines, conduct bias audits for diagnostic tools, and integrate AI into workflows while maintaining care quality. Clinician-led oversight has reduced AI misdiagnosis rates by 18% according to a NEJM Catalyst survey. Target metrics include AI accuracy benchmarks above 90% and bias rates below 5%.

- Third-party vendor accountability requirements – Vendors must be required to provide SOC 2 audits, AI transparency reports, NIST compliance evidence, quarterly risk score reporting, and API audit cooperation. 73% of AI breaches in healthcare are linked to third-party vendors, and 40% of healthcare data breaches involved vendor AI tools in 2024.

- Cleveland Clinic vendor accountability outcome – Cleveland Clinic achieved zero AI-related incidents in 2024 by holding vendors accountable for API audits and requiring quarterly risk score reporting, demonstrating that structured vendor accountability requirements produce measurable incident reduction.

- Human-in-the-loop as cross-stakeholder requirement – Human oversight protocols including clear clinician override procedures and human-in-the-loop processes for high-risk AI decisions require coordination across all four stakeholder groups, with C-suite policy authority, IT implementation, clinical execution, and vendor contract enforcement all contributing to a functional oversight system.

How does the NIST AI Risk Management Framework structure accountability for healthcare AI?

- Four-function governance structure – The NIST AI RMF organizes AI risk management around Govern (executive oversight and risk culture), Map (operational context and stakeholder impact assessment), Measure (trustworthiness evaluation against seven characteristics), and Manage (resource allocation and recovery planning), providing a complete accountability lifecycle rather than addressing only one phase of AI risk.

- Govern function as enterprise integration – The Govern function ensures AI risk is embedded in the broader enterprise risk management strategy, creating a risk-conscious culture from leadership down and establishing AI risk as a board-level concern rather than a technical department issue.

- Map function as pre-deployment requirement – The Map function requires evaluation of each AI system's operational context, identification of all affected stakeholders, and assessment of potential impacts on patients and workflows before deployment, establishing a formal pre-deployment accountability gate.

- Seven characteristics of trustworthy AI – The Measure function evaluates AI systems against safety, security and resilience, explainability, privacy enhancement, fairness, accountability, and reliability, providing a structured scoring methodology that quantifies trustworthiness rather than relying on binary compliant or non-compliant assessments.

- Cross-departmental collaboration requirement – The framework explicitly emphasizes collaboration across clinical, IT, legal, marketing, and operations departments for accountability, reflecting the multi-stakeholder nature of AI risk that no single department can manage in isolation.

- 240-organization development credential – The NIST AI RMF was developed with contributions from over 240 organizations spanning industry, academia, and government, establishing its credibility as a consensus governance standard rather than a regulatory imposition.

What does the EU AI Act's tiered risk model require for healthcare AI systems classified as high-risk?

- High-risk classification scope – Healthcare AI used for diagnostics, treatment recommendations, or clinical decision support typically falls under the EU AI Act's high-risk classification due to its potential impact on patient safety, encompassing the majority of clinically significant AI deployments in healthcare.

- Continuous risk management obligation – High-risk AI systems require ongoing risk management throughout their operational lifecycle rather than point-in-time assessment at deployment, establishing continuous monitoring as a regulatory requirement for EU-classified high-risk healthcare AI.

- Conformity assessment requirement – High-risk systems must undergo conformity assessments confirming they meet the Act's requirements before market release, providing a pre-deployment regulatory validation gate analogous to the NIST RMF's Map and Measure functions.

- EU database registration – High-risk healthcare AI must be registered in a designated EU database before market release, creating a public accountability record that extends beyond internal organizational documentation to regulatory transparency.

- Global regulatory ripple effect – The EU AI Act is expected to have global compliance implications similar to GDPR's effect on data privacy, meaning healthcare organizations operating internationally or using AI from internationally operating vendors face EU AI Act exposure regardless of their primary jurisdiction.

- Penalty structure – Non-compliance with EU AI Act prohibitions can result in fines of up to 35 million euros or 7% of global annual turnover, whichever is greater. At that scale, compliance investment can be weighed directly against the cost of non-compliance in financial planning.
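
The penalty ceiling is a "whichever is greater" rule, so the binding limit depends on the organization's turnover. A minimal Python sketch of that arithmetic, using only the two figures stated in this article (35 million euros and 7%); the function name and the sample turnover values are illustrative assumptions, and the result is an upper bound, not a prediction of any actual fine:

```python
from decimal import Decimal

# Figures quoted in this article for the top penalty tier.
FIXED_CAP_EUR = Decimal("35000000")  # 35 million euros
TURNOVER_RATE = Decimal("0.07")      # 7% of global annual turnover


def max_fine_eur(global_annual_turnover_eur: Decimal) -> Decimal:
    """Return the penalty ceiling: the greater of the fixed cap and 7% of turnover."""
    return max(FIXED_CAP_EUR, TURNOVER_RATE * global_annual_turnover_eur)


# Hypothetical turnovers, chosen only to show both branches of the rule.
small_org = max_fine_eur(Decimal("100000000"))   # 7% would be 7M, so the 35M cap applies
large_org = max_fine_eur(Decimal("1000000000"))  # 7% is 70M, which exceeds the 35M cap
```

The two limits meet at a turnover of 500 million euros (35 million divided by 0.07). Below that the fixed amount sets the ceiling, and above it the percentage does.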

How should healthcare organizations build governance frameworks that formalize AI risk accountability?

- Go/No-Go analysis as deployment gate – A documented Go/No-Go analysis for each AI system, recording its purpose and operational context, acts as a formal checkpoint before deployment. It creates an audit trail and forces an explicit assignment of accountability.

- Tiered risk model for resource allocation – Classifying AI systems into low, medium, and high-risk tiers enables proportional governance resource allocation, with high-risk systems including diagnostic tools and clinical decision support requiring comprehensive validation, explainability review, and executive approval rather than applying uniform governance overhead to all AI tools.

- Safe failure mode specification – Incident response plans for AI systems must specify safe failure modes and mechanisms to deactivate or override systems immediately, assigning accountability for both the technical implementation of failsafes and the clinical decision to activate them.

- Diverse oversight team composition – Building oversight teams with diverse backgrounds and expertise improves identification of unintended consequences and biases, with diversity of perspective serving as a governance quality control mechanism that homogeneous technical teams cannot provide.

- Compliant-ish as practical starting point – HealthTech Magazine notes that risk management frameworks allow organizations to be "compliant-ish," meaning somewhat compliant, which is substantively better than complete non-compliance. Partial governance implementation with a clear maturation roadmap is a defensible and practical starting position for organizations closing the accountability gap.

- HSCC SMART toolkit integration – The HSCC SMART toolkit provides healthcare-specific AI governance guidance that complements NIST AI RMF and EU AI Act frameworks, enabling organizations to apply sector-specific accountability structures alongside the broader regulatory frameworks.
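
The Go/No-Go gate and the tiered risk model above can be combined into one pre-deployment check. The following is a minimal Python sketch under stated assumptions, not a reference implementation. The high-risk use cases and the three high-risk controls come from the text. The rule separating medium from low risk, the medium-tier control, and every class, field, and function name are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# From the text: diagnostic tools and clinical decision support are high-risk.
HIGH_RISK_USES = {"diagnostics", "clinical_decision_support"}

# The HIGH entry follows the text; MEDIUM and LOW are illustrative assumptions.
REQUIRED_CONTROLS = {
    Tier.HIGH: {"comprehensive_validation", "explainability_review", "executive_approval"},
    Tier.MEDIUM: {"validation"},  # assumption
    Tier.LOW: set(),              # assumption
}


@dataclass
class AISystem:
    name: str
    purpose: str
    operational_context: str
    use_case: str
    touches_patient_data: bool  # assumption: used only to split medium from low
    accountable_owner: str = ""
    completed_controls: set = field(default_factory=set)


def classify(system: AISystem) -> Tier:
    """Assign a risk tier; only the high-risk rule is taken from the text."""
    if system.use_case in HIGH_RISK_USES:
        return Tier.HIGH
    return Tier.MEDIUM if system.touches_patient_data else Tier.LOW


def go_no_go(system: AISystem) -> tuple[bool, list[str]]:
    """Return (go, blockers); an empty blocker list means Go."""
    blockers = []
    if not system.purpose.strip():
        blockers.append("purpose not documented")
    if not system.operational_context.strip():
        blockers.append("operational context not documented")
    if not system.accountable_owner.strip():
        blockers.append("no accountable owner assigned")
    missing = REQUIRED_CONTROLS[classify(system)] - system.completed_controls
    blockers.extend(f"missing control: {c}" for c in sorted(missing))
    return (not blockers, blockers)
```

Returning the list of blockers rather than a bare yes or no is a deliberate choice: the list doubles as the audit-trail entry for why a system was held back, and a missing owner blocks deployment in the same way a missing validation does.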

How does Censinet RiskOps address AI risk accountability at the operational scale healthcare organizations require?

- Assessment automation at scale – Censinet AI reduces per-vendor assessment time from 40 hours to 2 hours by using machine learning to analyze questionnaires, security evidence, and compliance documents, enabling risk teams to manage accountability for large vendor portfolios without proportional staffing increases.

- Accuracy improvement through automation – Automated validation against NIST and HIPAA standards delivers 95% greater accuracy than manual review processes, addressing the quality dimension of vendor accountability alongside the efficiency dimension.

- High-risk integration discovery – A major US health system using Censinet AI uncovered 15% more high-risk AI integrations related to patient data privacy than manual processes identified, establishing the platform's capability to surface accountability gaps that existing processes miss.

- Human-in-the-loop escalation – High-risk issues including ethical biases in diagnostic AI models are flagged by the platform but require approval from designated experts for escalations, remediation, or risk acceptance, maintaining human accountability for the most consequential decisions while automating routine assessment work.

- False positive reduction – The hybrid human-in-the-loop approach cuts false positives by 60% through iterative learning, easing the alert fatigue that causes accountability gaps when teams are overwhelmed by low-quality signals.

- 1,000-vendor management without custom development – The platform enables management of third-party vendor risk for over 1,000 vendors without custom development, providing the scalability that healthcare organizations with large and growing AI vendor portfolios require to maintain accountability across the full scope of their third-party exposure.
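
To put the per-vendor figures above in portfolio terms, the short Python sketch below multiplies them out. It assumes exactly 1,000 vendors, one assessment per vendor, and that the quoted 40-hour and 2-hour figures apply uniformly. Those are simplifying assumptions for illustration, not claims from the source, and the function name is hypothetical.

```python
def assessment_hours(vendors: int, hours_per_vendor: float) -> float:
    """Total assessment effort for one pass over the vendor portfolio."""
    return vendors * hours_per_vendor


VENDORS = 1000  # assumption: the text says "over 1,000"

manual_hours = assessment_hours(VENDORS, 40)    # 40 hours per vendor, as quoted
automated_hours = assessment_hours(VENDORS, 2)  # 2 hours per vendor, as quoted
hours_saved = manual_hours - automated_hours
reduction = hours_saved / manual_hours          # fraction of effort removed
```

Under these assumptions, one pass over the portfolio drops from 40,000 hours to 2,000 hours, a 95% reduction in assessment effort.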